Object detection in 2D images has made significant progress with the advent of modern computer vision (CV) algorithms. However, detecting objects in 3D rather than 2D space is a more challenging and more realistic problem when building perception functions for autonomous driving systems. An autonomous vehicle (AV) needs to perceive the objects in the 3D scene through its sensors in order to plan its motion. A series of LiDAR-based solutions have been proposed in existing connected and autonomous vehicle (CAV) systems to provide 3D location information. However, they all face a common challenge: limited computing resources on the edge. To address this challenge and enable reliable real-time localization services for CAVs, this project proposed a smart roadside unit (RSU) for driving or parking assistance with advanced CV technologies. The RSU sensor is a multi-source traffic sensing device developed by the UW STAR Lab. It can transmit data over a 4G/5G data plan or over the Long Range (LoRa) and Narrowband Internet of Things (NB-IoT) communication protocols. In this research, the device is tested for both data analysis and communication with vehicles.

The team developed a cooperative perception framework that achieves accurate 3D vehicle localization from single images for driving or parking purposes. A customized CV algorithm based on Mask R-CNN is implemented on the IoT device to detect 2D car keypoints in the image or video data collected by monocular cameras. Combined with the Car Keypoints Prediction (CKP) algorithm, the Depth Detection Algorithm (DDA) developed by the team extracts the third dimension (Z coordinates) from the detected car keypoints. The fusion algorithm then integrates the Mask R-CNN model and DDA results to provide more reliable localization services to all vehicles in the sensing range. As a result, the proposed 3D vehicle localization system can 1) provide more reliable vehicle localization information; 2) increase computational efficiency to reduce information delay; and 3) serve more vehicles over a more extensive sensing range.
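To make the idea of recovering depth from 2D keypoints concrete, the following is a minimal sketch under a standard pinhole camera model. It is not the team's DDA, whose details are not given here. It assumes that two detected keypoints have a known real-world separation (for example, an approximate vehicle width), that the segment between them is roughly parallel to the image plane, and that the camera focal length and principal point are known in pixels. The function names `estimate_depth` and `back_project` and all numeric values are hypothetical.

```python
import math


def estimate_depth(kp_a, kp_b, real_distance_m, focal_px):
    """Estimate depth Z (meters) from two image keypoints.

    Assumption: pinhole model with the keypoint segment roughly parallel
    to the image plane, so pixel_distance = focal_px * real_distance_m / Z.
    """
    pixel_distance = math.hypot(kp_a[0] - kp_b[0], kp_a[1] - kp_b[1])
    if pixel_distance == 0:
        raise ValueError("keypoints coincide; depth is undefined")
    return focal_px * real_distance_m / pixel_distance


def back_project(pixel, depth_m, focal_px, principal_point):
    """Back-project a pixel (u, v) at a known depth into camera coordinates."""
    u, v = pixel
    cx, cy = principal_point
    x = (u - cx) * depth_m / focal_px
    y = (v - cy) * depth_m / focal_px
    return (x, y, depth_m)


# Hypothetical example: two keypoints 100 px apart on a car assumed 1.8 m wide,
# seen by a camera with a 1000 px focal length and principal point (640, 360).
depth = estimate_depth((600, 400), (700, 400), real_distance_m=1.8, focal_px=1000.0)
position = back_project((650, 400), depth, focal_px=1000.0, principal_point=(640, 360))
```

In practice the parallel-segment assumption rarely holds exactly, which is one reason a learned or fused estimate, as in the framework described above, would be preferred over this closed-form approximation.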

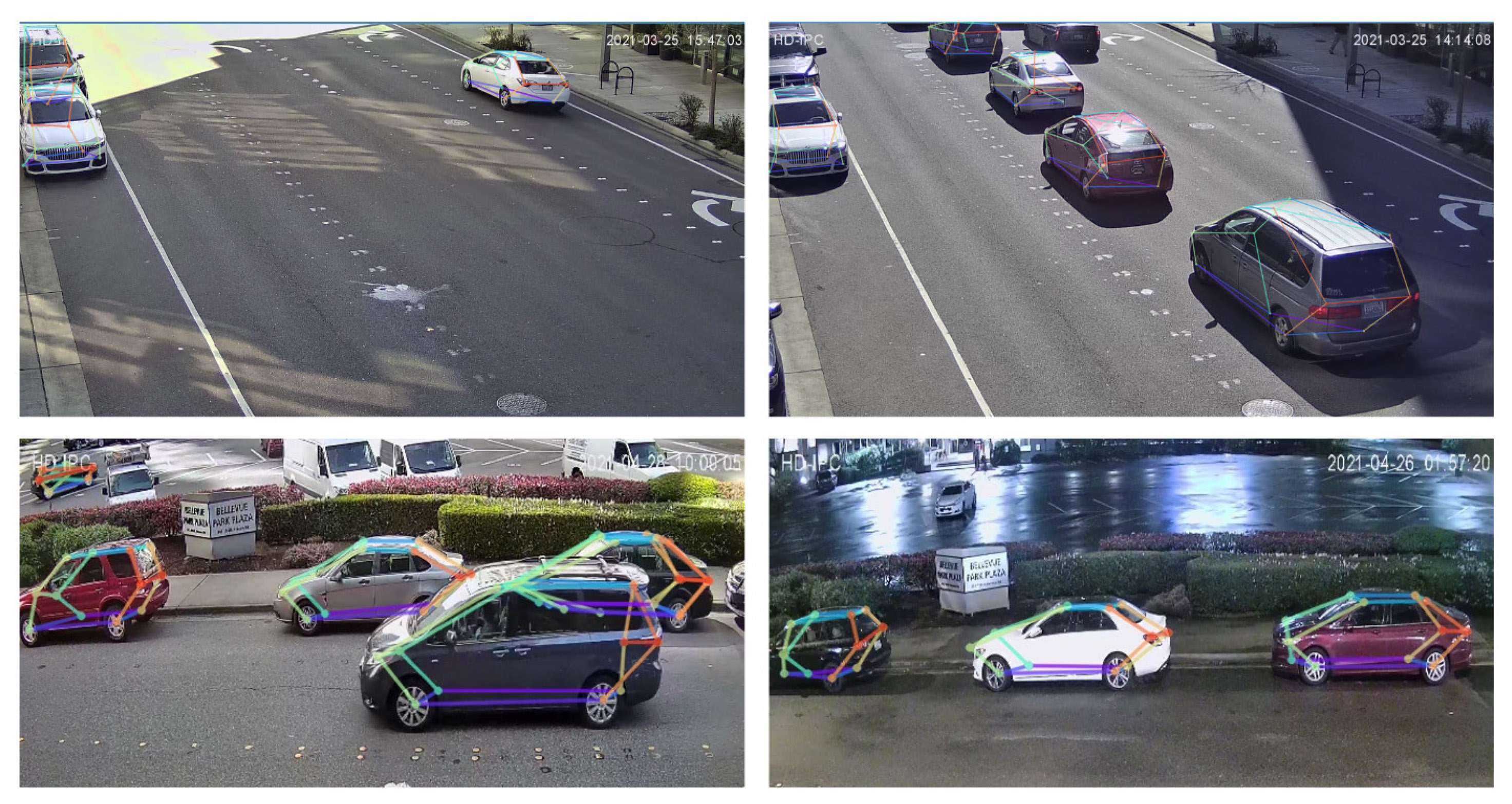

Figure 1. Example Results of 3D-SISS on Self-collected Data in Bellevue Test Bed.

Related Publication

Wang, Y., Sun, W., Liu, C., Cui, Z., Zhu, M., & Pu, Z. (2021). Cooperative Perception of Roadside Unit and Onboard Equipment with Edge Artificial Intelligence for Driving Assistance.